WHAT IS THE GOOGLE SUPPLEMENTAL INDEX, OR “GOOGLE SNOT”?

Imagine you walk into a clothing store. New arrivals hang on the racks and mannequins. On the shelves, you see neatly folded items from past collections. A table of sale items is also clearly visible, and seasonal offers stand out most of all. Few people realize that the storage room holds items that haven’t been advertised for a long time. You can still buy them, but no one asks about these clothes because they are hidden. For the store, it makes more sense to free up space for popular clothes that will bring the brand a profit.

For a long time, search engines treated web pages the same way through the supplemental index. Google and other search engines hid web pages that didn’t meet important quality standards: pages that were too old, uninteresting, or duplicated. And if a website had too many of these pages, its overall ranking suffered.

What Are We Talking About?

Main and Supplemental Index in Google

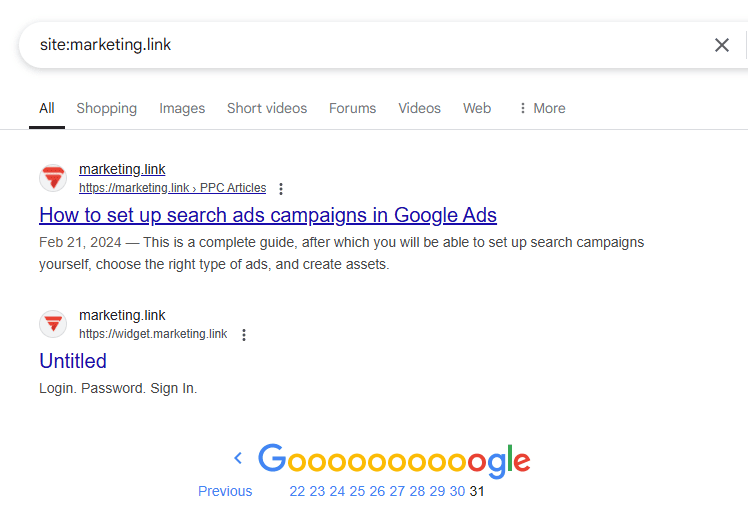

Google used to maintain a secondary database, the Google Supplemental Index (SI), and until 2007 it labeled the results that came from it. Let’s look at what factors sent web pages to that storage room and what website owners and SEO specialists still need to consider today. In the past, you could detect pages in the supplemental index by using the “site:” operator with a website’s URL, for example: site:marketing.link. Today, this command only gives a rough count of indexed pages, and only if you scroll through all the search results for the query.

Previously, Google would show a message at the end of the search results saying that some results were hidden.

That’s what the supplemental index used to look like.

But in 2006, Google completely changed how it crawled and indexed supplemental results.

“…we’re indexing URLs with more parameters and continuing to apply fewer restrictions to the sites we crawl. As a result, Supplemental Results are fresher and more comprehensive than ever. We’re also working on showing more Supplemental Results, ensuring that every query can search the supplemental index, and plan to roll this out over the summer. So the difference between the main and the supplemental index continues to narrow.”—“Supplemental goes mainstream,” Google Search Central

In 2007, Google officially removed visible labeling for the Supplemental Index, integrating those pages into the main index. However, the difference between pages of various quality levels probably still exists in Google’s indexing and ranking systems.

“Yes, until 2010 Google maintained two indexes for technical reasons. The main index was updated more often and faster, while the supplemental index was for lower-priority pages that didn’t need to be updated as frequently.”—“Reminder: The Google Supplemental Index Has Not Existed In Over A Decade,” Search Engine Roundtable

Google added only useful, informative, and unique pages to the main index. If a search algorithm evaluated a site’s page as unimportant for the main index, it went into supplemental results. Ukrainian SEO experts even used slang—“Google snot”—to refer to such results.

The supplemental index negatively affected site rankings because pages indexed as secondary were seen as less important. That meant they had lower rankings, were less visible, and received fewer visits.

By 2009, Google was testing a new indexing method—Caffeine—which was fully launched in 2010. That’s when the use of the supplemental index was discontinued.

Supplemental results helped filter out low-quality or duplicate content, such as repeated product descriptions on a site. Even though the supplemental index no longer exists, copied or AI-generated content still has lower priority and harms a website’s visibility in search results.

How Did It Work?

- Google crawled the web using bots to index web content. Its main database stored new and most relevant content.

- Google algorithms decided which content was less important, not matching the audience’s queries or keywords, or outdated. These pages were sent to the secondary index.

- The supplemental index was updated less often than the main one, so those pages had lower priority, rankings, and visibility.

For over 15 years, results from the main and supplemental indexes have been shown together in search results. Meanwhile, Google has become even more focused on content relevance, uniqueness, natural tone, and E-E-A-T principles (Experience, Expertise, Authoritativeness, Trustworthiness).

Why Did Web Pages End Up in the Google Supplemental Index?

Some common mistakes are still worth avoiding today. Although the supplemental index concept is outdated, almost all the factors that sent pages there still hurt SEO.

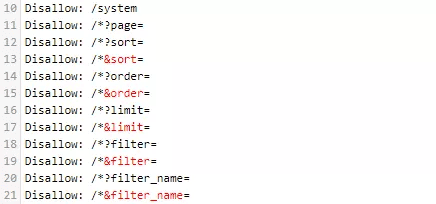

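- Duplicate content: Google’s Panda filter is very strict, even with duplicates on the same site. If you can’t fix this right away, keep crawlers away from non-unique pages with a Disallow directive in robots.txt. Note that Disallow blocks crawling rather than indexing: a blocked URL can still appear in search if other pages link to it, so a “noindex” meta tag on a crawlable page is the more reliable way to keep it out of the index.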

- Poor site structure or complex navigation: This harms both user experience and search engine crawling. Run site audits regularly. If crawlers can’t easily navigate your site, they may skip pages. Ensure your site has an XML sitemap and user-friendly structure.

- Deeply nested pages: If it takes more than four clicks to reach a page, both users and crawlers may avoid it. Simplify your architecture and make important pages more accessible.

- Low PageRank: Improve it by consistently building high-quality backlinks from authoritative websites and internal links across your own site.

- Thin content: Don’t publish just a couple of sentences or leave the page empty. Focus on expert-level content. Even simple product or service descriptions should follow writing best practices. Learn to create various formats: text, infographics, video—everything that Google values and users share.

- Infrequent updates: Stale pages can lose search engines’ trust. Keep content fresh by adding expert quotes, images, or video to blog posts.

- No external links: A site that never links out seems less trustworthy. Maintain balance, though: avoid too many affiliate links and links to shady sites.

- Mismatched page topics: These raise red flags, as do AI-generated texts. “Spammy” content was once the main concern; today it is a lack of uniqueness and authenticity.

- Missing or duplicate meta tags (title, description) were also reasons pages got pushed into the supplemental index.
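
The “more than four clicks” guideline from the list above can be checked with a breadth-first search over a site’s internal links. The following is a minimal sketch, not a full crawler: the `SITE` link map, its URLs, and the function names are hypothetical, and in practice the link map would come from a crawl or a site audit export.

```python
from collections import deque


def click_depths(links, home):
    """Return the minimum number of clicks from `home` to each reachable page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:  # first visit is the shortest path in BFS
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths


def too_deep(links, home, max_clicks=4):
    """List pages that need more than `max_clicks` clicks to reach."""
    depths = click_depths(links, home)
    return sorted(page for page, depth in depths.items() if depth > max_clicks)


# Hypothetical internal-link map: page -> pages it links to.
SITE = {
    "/": ["/catalog", "/blog"],
    "/catalog": ["/catalog/shoes"],
    "/catalog/shoes": ["/catalog/shoes/boots"],
    "/catalog/shoes/boots": ["/catalog/shoes/boots/winter"],
    "/catalog/shoes/boots/winter": ["/catalog/shoes/boots/winter/model-1"],
    "/blog": [],
}

print(too_deep(SITE, "/"))
```

Pages that the search never reaches are absent from the result entirely, which usually means nothing links to them and they deserve a separate check.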

Incorrect internal linking and robots.txt settings could cause indexing problems. A site audit can help detect and fix these issues.
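
One way to catch robots.txt mistakes is to test which URLs a rule set actually blocks from crawling. The sketch below uses Python’s standard `urllib.robotparser`; the rules and the example.com URLs are made up for illustration. Keep in mind that this module does simple prefix matching and does not support wildcard patterns, so real crawlers may interpret more complex rules differently.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical rules: keep crawlers out of printer-friendly duplicates
# and internal search result pages.
ROBOTS_TXT = """\
User-agent: *
Disallow: /print/
Disallow: /search
"""


def blocked_urls(robots_txt, urls, user_agent="Googlebot"):
    """Return the URLs that the given robots.txt text forbids crawling."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return [url for url in urls if not parser.can_fetch(user_agent, url)]


# Hypothetical URLs to check.
URLS = [
    "https://example.com/catalog/boots",
    "https://example.com/print/catalog/boots",
    "https://example.com/search?q=boots",
]

print(blocked_urls(ROBOTS_TXT, URLS))
```

If an important page shows up in the blocked list, the rule is too broad; if a duplicate page does not, the rule misses it.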

How People Avoided the Supplemental Index

To make sure your pages get recommended in search, you need to work on SEO regularly. The supplemental index wasn’t the only issue site owners faced.

Being consistent in your SEO strategy helps grow organic traffic. The steps once used to avoid the supplemental index still apply:

- Make sure there’s no copied content—replace it with unique articles, images, videos. Include proper meta tags and keywords.

- Check backlink quality and quantity using Google Search Console, Ahrefs (Domain Rating, Backlink Checker), or Moz Spam Score.

- Use the “noindex” tag for pages that don’t need search visibility, such as a “Privacy Policy” page. That way, they won’t affect your SEO metrics.

“You can prevent new content from appearing in search results by adding the URL slug to your robots.txt file. Search engines use these files to understand how to index content. HubSpot’s system domains with hs-sites are always set as no-index in robots.txt.

If your content is already indexed, you can add a ‘noindex’ meta tag to the HTML header. This tells search engines to stop showing it in search results.”—“Prevent content from appearing in search results,” HubSpot

- Optimize internal linking—link to key pages from top-level and second-level pages, and from navigation menus.

- Use 301 redirects for permanently moved pages, and canonical tags to point duplicate or near-duplicate URLs to the preferred version.

- Site speed is critical. Make sure nothing breaks on mobile, all elements load correctly, and forms are easy to use. A low bounce rate suggests that visitors find your site helpful.

- Publish high-quality content that meets E-E-A-T standards. Content should be trustworthy, expert-level, authoritative, and show the author’s experience. If your copywriter specializes in a niche field—great. Deep knowledge makes content more unique and valuable.

- Track performance using Google Analytics and Google Search Console—both before and after making changes.
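
The “noindex” step from the list above is easy to verify automatically. The sketch below uses only Python’s standard `html.parser` to check whether a page’s HTML carries a robots meta tag with a noindex directive. The class and function names are hypothetical, and the check covers only meta tags in the HTML, not an `X-Robots-Tag` HTTP header, which would need a separate check.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collect directives from <meta name="robots"> and <meta name="googlebot">."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() in ("robots", "googlebot"):
            for directive in attr.get("content", "").split(","):
                self.directives.add(directive.strip().lower())


def has_noindex(html):
    """Return True if the HTML asks search engines not to index the page."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return "noindex" in parser.directives


# Hypothetical page markup.
PRIVACY_PAGE = '<html><head><meta name="robots" content="noindex, follow"></head></html>'
print(has_noindex(PRIVACY_PAGE))
```

Running such a check across the pages you want visible is a quick way to spot a noindex tag left on a key page by accident.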

The risk of landing in the supplemental index is gone—but search engine evaluation criteria remain. What’s more, we’re now in the age of AI, which affects SEO in new ways.

Content used to just need to be unique and spam-free. Now it also needs to feel natural—not AI-generated. We recommend checking your texts for AI content even if you wrote them yourself.

Emotionless text, especially on popular topics, may be flagged as AI-generated. That’s likely because chatbots pull info from multiple sources—if your sentences resemble many others online, they may be seen as non-original. Check your work in tools like Smodin, GPTZero, AI Detector, or OpenAI.

Conclusions

The supplemental index was a secondary database in which Google stored pages it considered less original or useful, including those that were rarely updated. The goal was to give users more accurate and valuable search results.

In 2006, Google eased site restrictions and improved its crawling and indexing. In 2007, it officially removed the supplemental label from search results, although both indexes continued to exist for technical reasons until 2010.

Today, Google still evaluates content quality. The supplemental index is gone, but the old criteria still apply—along with new ones like AI-generated content. To rank well, keep publishing original content and build strong backlinks.

FAQ

The Google Supplemental Index was a secondary database for pages that Google considered lower priority. While it no longer exists, the same content quality factors that once pushed pages into it still matter for SEO today.

Ukrainian SEO experts used the slang term “Google snot” because it sounds similar to “Supplemental” and reflects the undesirable status of such results.

The supplemental index no longer exists. Google removed the visible supplemental label in 2007, merged those pages into its main results, and stopped maintaining a separate index when Caffeine launched in 2010.